As a healthcare IT outsourcing partner, we know exactly how the sales process works — the polished demos, the shiny roadmaps, the reference clients who were carefully selected to match your business situation.

We also know what happens six months after a clinical organization signs with the wrong partner. What follows is the healthcare AI vendor evaluation framework we’d want our own clients to use — including when they’re evaluating us.

Six dimensions. Specific questions to ask in every vendor conversation. And a scoring approach that makes tradeoffs visible before you sign anything.

Key summary

Most clinic leaders choose healthcare AI vendors the wrong way — evaluating on demo quality rather than the factors that determine whether a deployment actually succeeds.

This guide covers the six dimensions that matter: problem clarity, compliance and data security, EHR integration, proven outcomes, total cost of ownership, and team fit. It also includes a vendor scoring framework for shortlisting.

Start with your problem

Before you sit through a single demo, get clear on what you’re actually trying to fix.

The clinics that get this right start with a specific, measurable problem — not a category.

“We want to use AI” is not a problem statement. “Every time we add a new clinic location, we need 6 weeks of manual data reconciliation before the system is usable” is.

Falling for a slick presentation without a clear problem in mind is how clinics end up with technology that solves nothing — sophisticated software searching for a use case it was never built around.

You will need to set your success metrics before your first vendor call. If you can’t answer “what does good look like in six months?” you’ll end up evaluating vendors on their demos rather than your outcomes.

Decide what you’re measuring — time saved per physician per week, reduction in billing errors, drop in intake processing time, etc. — before anyone pitches you.

Clinic leaders who anchor every vendor conversation in a defined operational need cut through the noise faster and arrive at better decisions. The goal of this first step isn’t to build a committee. It’s to make sure you’re evaluating AI solutions against your problem, not theirs.

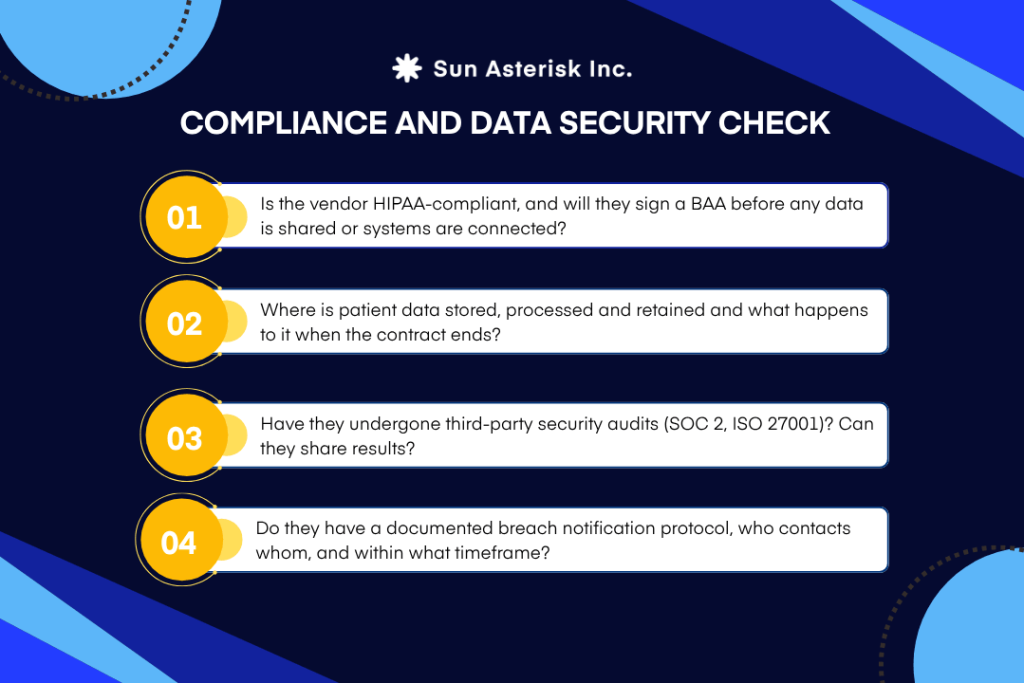

Compliance and data security

This is the filter that should eliminate vendors before the demo — not after. In healthcare, compliance isn’t a feature to evaluate alongside integrations and pricing. It’s a baseline.

If a vendor can’t answer compliance questions clearly and in writing, they shouldn’t make your shortlist, no matter how good the product looks on screen.

We’ve seen it from both sides.

“We’re working on our Business Associate Agreement” is not an acceptable answer. Neither is “we can discuss data residency during onboarding.” If a vendor is asking you to begin integration work before compliance questions are settled, that’s a structural risk — not a process gap they’ll fix later.

When choosing a healthcare AI vendor, have you fully addressed every part of the compliance question?

For clinics expanding into Southeast Asia or Europe, compliance deserves a separate conversation entirely.

A vendor that is fully compliant in the US may have no readiness for PDPA in Thailand, PDPL in Saudi Arabia, or GDPR across the EU. These aren’t minor variations in paperwork — they govern where patient data is stored, how long it’s retained, and what informed consent looks like at the point of care.

Your vendor needs to have solved this already, not be learning it alongside you.

EHR and system integration

Integration is where the gap between a compelling demo and a working deployment is widest. We’ve built enough of these to know: the demo environment is never the real environment.

If the AI requires a separate login, it’s dead on arrival. We said what we said.

Any tool that pulls clinicians out of their primary workflow will be ignored — not eventually, immediately. The AI’s output needs to appear inside the system your clinical team already works in, not alongside it in a second tab.

In 2024, 71% of non-federal acute-care hospitals reported using predictive AI integrated directly with their EHR. That number tells you where clinician tolerance sits.

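When vendors say “fully integrated,” ask them to be specific. The standard that actually matters is FHIR (Fast Healthcare Interoperability Resources), the HL7 specification for how health data is structured and exchanged through APIs. It is what allows an AI tool to securely pull real-time patient data from your EHR without disrupting clinical workflow.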
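To make “be specific” concrete: a FHIR-based integration ultimately comes down to standard REST requests against the EHR’s FHIR endpoint. The sketch below only builds such a request URL. The base URL and patient ID are hypothetical placeholders; the search parameters (`patient`, `category`, `_sort`, `_count`) come from the FHIR search specification, but which of them a given EHR actually supports is something to confirm with the vendor.

```python
from urllib.parse import urlencode

# Hypothetical FHIR endpoint; the real one comes from your EHR vendor.
FHIR_BASE_URL = "https://ehr.example.org/fhir"


def build_observation_search(patient_id: str, category: str, count: int = 5) -> str:
    """Build a FHIR search URL for a patient's most recent observations."""
    params = {
        "patient": patient_id,  # restrict results to one patient
        "category": category,   # e.g. "laboratory" or "vital-signs"
        "_sort": "-date",       # newest first
        "_count": count,        # page size
    }
    return f"{FHIR_BASE_URL}/Observation?{urlencode(params)}"


# Placeholder patient ID, for illustration only.
url = build_observation_search("example-patient-id", "laboratory")
print(url)
```

A vendor that is integrated in production should be able to show you the equivalent requests running against an EHR like yours, along with how authorization is handled.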

Integration also means your full stack, not just your EHR.

Depending on your use case, the tool needs to connect with your imaging systems, billing platforms, and scheduling software. Always ask whether those connections exist in production at a comparable site — not whether they’re technically possible.

A common rule of thumb is that around 80% of the work in an AI project is getting data into a usable state. Siloed records, inconsistent formats, and ungoverned data will undermine any vendor’s software before it’s had a chance to run.

We surface this early with every client because it’s the thing that most often determines implementation speed.

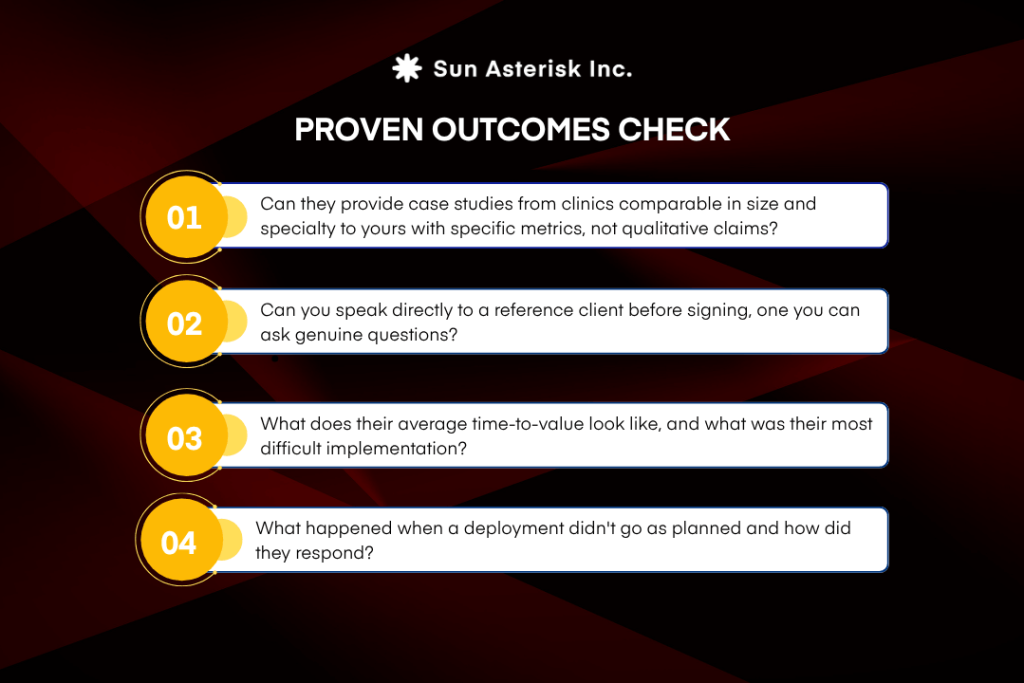

Proven outcomes

The era of “we’re working on case studies” is over. The healthcare AI market has been running real deployments long enough that vendors without specific, measurable results from clinical environments similar to yours represent a meaningfully higher risk than those who can show their track record.

Time saved per clinician per week. Error rate before and after. Cost per task. If a vendor’s case studies are vague — “significantly improved workflow efficiency” without a number attached — that’s a signal worth noting.

How a vendor handles a difficult deployment tells you more than their highlight reel. A partner who can walk you through what went wrong, what they changed, and what the client’s outcome looked like afterwards is a fundamentally different partner than one who has only success stories.

Ask to speak directly to a reference client running the same EHR stack you use — not a reference client selected by the vendor’s sales team.

Ongoing support and total cost of ownership (TCO)

Most clinics underestimate the full cost of deploying healthcare AI — and vendors know it. Initial pricing is structured to look attractive.

The real expense arrives in the months that follow: retraining when your workflows change, updates when the model starts making worse decisions as real-world conditions shift, modifications nobody accounted for, and support needs that weren’t in the original agreement.
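A rough way to see the gap between the initial quote and the real expense is to add up the recurring items over a multi-year horizon. The sketch below is illustrative arithmetic only: every figure is a hypothetical placeholder, and the four cost categories are an assumed simplification of the items listed above.

```python
from dataclasses import dataclass


@dataclass
class CostModel:
    """All figures are hypothetical placeholders, not vendor pricing."""
    implementation: float     # one-time setup and integration
    annual_license: float     # recurring subscription
    annual_support: float     # support outside the original agreement
    annual_retraining: float  # model updates and workflow-driven changes


def total_cost_of_ownership(costs: CostModel, years: int) -> float:
    """One-time cost plus all recurring costs over the given horizon."""
    recurring = costs.annual_license + costs.annual_support + costs.annual_retraining
    return costs.implementation + recurring * years


example = CostModel(
    implementation=40_000,
    annual_license=30_000,
    annual_support=8_000,
    annual_retraining=12_000,
)

# What the initial pricing typically highlights: setup plus first-year license.
first_year_quote = example.implementation + example.annual_license
three_year_tco = total_cost_of_ownership(example, years=3)

print(f"First-year quote: {first_year_quote:,.0f}")
print(f"Three-year TCO:   {three_year_tco:,.0f}")
```

With these placeholder numbers, the three-year total is well over double the first-year quote, which is the pattern to test for when you ask a vendor to itemize costs.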

AI tools need more ongoing attention than traditional software. Clinical workflows change. Patient populations shift. Regulations get updated.

An AI system that performed well at launch will degrade quietly over time if nobody is actively maintaining it — and the first sign is usually a clinician who stops trusting it.

Ask every vendor specifically how they’ve handled performance degradation with existing clients. Ask what their maintenance model looks like at 12 months, not just at go-live. A vendor without a clear answer to those questions is a risk to your operations, not a partner in improving them.
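One way to make “performance degradation” a concrete question is to ask what signal the vendor monitors and at what threshold they act. The sketch below assumes one possible signal, the monthly rate at which clinicians override the AI’s output, and flags months that drift above the launch baseline. The signal, the three-month baseline, and the tolerance are illustrative assumptions, not a recommended standard.

```python
from statistics import mean


def flag_degradation(monthly_override_rates, baseline_months=3, tolerance=0.05):
    """Return indices of months whose override rate exceeds the launch
    baseline (mean of the first `baseline_months`) by more than `tolerance`."""
    if len(monthly_override_rates) <= baseline_months:
        raise ValueError("need more months of data than the baseline window")
    baseline = mean(monthly_override_rates[:baseline_months])
    return [
        month
        for month, rate in enumerate(monthly_override_rates)
        if month >= baseline_months and rate > baseline + tolerance
    ]


# Hypothetical data: share of AI outputs overridden by clinicians each month.
rates = [0.10, 0.12, 0.11, 0.12, 0.14, 0.18, 0.22]
flagged = flag_degradation(rates)
print(flagged)  # months (0-indexed) that warrant a review with the vendor
```

A vendor with a real maintenance model should be able to name the equivalent signal they track and who is responsible when it crosses a threshold.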

Team and cultural fit

The best technology fails with the wrong implementation culture. This is especially true in clinical environments, where staff trust is hard-won, and the consequences of a poorly managed rollout are felt for years.

A single enthusiastic champion is a fragile foundation. What works is pairing C-suite sponsors with frontline clinicians, IT, and compliance — early, not at go-live. Shared success metrics agreed before any contract is signed convert enthusiasm into accountability. When everyone has agreed on what “good” looks like, it’s much harder for an underperforming implementation to quietly drift.

Evaluate workflow fit honestly. A tool that adds clicks, extra logins, or new screens to a clinician’s day will be abandoned — not eventually, immediately. Assess whether the solution fits how your team actually works, and gauge readiness for change before implementation begins, not after go-live when the resistance is visible.

Clinicians need to understand what a system can and can’t do, and when its output should be questioned. Build a culture where overriding the AI is treated as a normal, professional judgment call — not an admission that the technology failed. That’s the primary defence against over-reliance on outputs that don’t match the clinical reality in front of them.

Give frontline staff a pathway to surface concerns. Clinicians who are treated as stress-testers rather than end-users — who can see performance data and flag when something feels off — are significantly more likely to trust the system and stay engaged with it.

How to score and compare vendors

When you’re ready to compare your shortlist, use this scoring approach across the six dimensions above. The goal isn’t a definitive number — it’s to make tradeoffs visible before you’re in a contract negotiation.

A vendor that scores strongly on compliance and integration but has no comparable reference clients is a different risk profile from one with a strong track record but vague data privacy answers.
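A minimal sketch of how such a scorecard could work, assuming a 1 to 5 score per dimension. The weights and the compliance cut-off are hypothetical and should be set by your own team. Compliance is treated as a gate rather than just another weighted item, in line with the filter described earlier.

```python
# Hypothetical weights per dimension; they sum to 1.0.
WEIGHTS = {
    "problem_clarity": 0.15,
    "compliance_security": 0.25,
    "ehr_integration": 0.20,
    "proven_outcomes": 0.15,
    "total_cost_of_ownership": 0.15,
    "team_fit": 0.10,
}
COMPLIANCE_GATE = 3  # assumed minimum compliance score to stay on the shortlist


def score_vendor(scores):
    """Weighted score on a 1-5 scale, or None if the vendor fails the compliance gate."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    if scores["compliance_security"] < COMPLIANCE_GATE:
        return None
    return round(sum(WEIGHTS[d] * scores[d] for d in WEIGHTS), 2)


def weak_dimensions(scores, threshold=3):
    """Dimensions scored below the threshold, so tradeoffs stay visible."""
    return sorted(d for d in WEIGHTS if scores[d] < threshold)


# Two hypothetical vendors mirroring the risk profiles described above.
vendor_a = {"problem_clarity": 4, "compliance_security": 5, "ehr_integration": 5,
            "proven_outcomes": 2, "total_cost_of_ownership": 3, "team_fit": 4}
vendor_b = {"problem_clarity": 4, "compliance_security": 2, "ehr_integration": 3,
            "proven_outcomes": 5, "total_cost_of_ownership": 4, "team_fit": 4}

for name, scores in [("Vendor A", vendor_a), ("Vendor B", vendor_b)]:
    print(name, score_vendor(scores), weak_dimensions(scores))
```

Reporting the weak dimensions next to the total keeps a strong overall score from hiding a gap, such as Vendor A’s lack of comparable outcomes, while the gate removes Vendor B regardless of its track record.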

Choose your healthcare AI vendor on outcomes, not demos

We built this framework because we’ve seen what happens on both sides of a bad vendor decision — and because we think clinic leaders deserve a clearer way to evaluate any partner, including us.

If you’re currently shortlisting healthcare AI vendors and want a second opinion on how any candidate measures up against these dimensions, our healthtech team is happy to walk through it with you. No pitch. Just the framework applied to your specific environment.

Talk to the Sun* Healthtech Team