Explainable AI in healthcare is reshaping how hospitals diagnose, treat, and communicate with patients. Yet a troubling gap persists: patients trust their doctors far more than they trust the machine behind the diagnosis, and closing that gap requires more than accurate algorithms.

It requires transparency.

Join us to explore how Explainable AI (XAI) in healthcare closes that gap and how organizations can deploy it today to drive deeper, more meaningful patient engagement.

Key takeaways

- AI adoption in healthcare is accelerating, but patient trust lags far behind the technology's performance.

- Explainable AI (XAI) is the critical bridge between algorithmic accuracy and patient confidence.

- Five practical XAI strategies, from bias transparency to proactive alerts, can transform patient engagement.

- Healthcare organizations that embed XAI into patient-facing tools see measurably higher adherence, satisfaction, and outcomes.

The Rise of AI in Patient-Facing Healthcare

Artificial intelligence has moved beyond the back offices of healthcare IT departments and into the clinical front line. Today it powers patient portals, chatbots, diagnostic tools, and predictive risk engines that millions of people interact with every day.

The rise of patient-facing AI in healthcare is accelerating rapidly as advanced analytics move closer to the point of care. The global AI in healthcare market reached $22.45 billion in 2023 and is projected to grow at a 36.4% CAGR through 2030, reflecting the speed at which health systems are integrating intelligent technologies into clinical workflows.

Patient portals powered by AI are now offering automated triage, personalized wellness nudges, predictive readmission scores, and even conversational chatbots that guide patients through post-discharge care. In diagnostics, deep learning models are reading ECGs, flagging diabetic retinopathy, and triaging radiology queues faster than any human team.

The trajectory is steep, and the performance benchmarks are genuinely impressive. Yet, beneath these remarkable numbers lies a profound and growing tension, one that no accuracy score can resolve on its own.

Why Better Accuracy Doesn’t Always Mean Better Trust

Despite impressive performance metrics, the opacity of many AI systems is creating a growing confidence gap in healthcare, not only among patients, but increasingly among clinicians as well.

For patients, unexplained predictions can feel alarming rather than reassuring. Receiving a notification such as “elevated cancer risk” without any explanation of the underlying factors does not build trust; it creates fear and confusion.

Concerns about AI hallucinations further compound this anxiety. High-profile failures of large language models have heightened public awareness that AI can sometimes generate confident but inaccurate outputs, raising legitimate questions about reliability in clinical contexts.

At the same time, data privacy concerns remain deeply ingrained. Many patients worry that their sensitive health data could be used to train models, shared with insurers, or exposed through security breaches without their knowledge or consent.

Clinicians face their own challenges. Many report increasing alert fatigue and skepticism toward AI recommendations that cannot be easily interrogated, explained, or overridden.

The result is a paradox: AI systems may be technically powerful, but without transparency and control, both patients and clinicians struggle to trust them.

Why Healthcare Needs Explainable AI for Trust

Trust is not a soft metric in healthcare. It is a clinical outcome driver.

Patients who trust their care team and the tools supporting that team demonstrate significantly higher medication adherence, follow-through on treatment plans, and willingness to engage in preventive care.

Research from the Journal of the American Medical Informatics Association demonstrates that when patients understand the reasoning behind a clinical recommendation, adherence rates improve by 26% compared to opaque algorithmic guidance.

This is the “XAI dividend”: not just transparency for its own sake, but transparency that directly converts into health outcomes.

Healthcare organizations face a specific trust burden that other industries do not. The stakes of misunderstanding are not a bad product recommendation; they are a missed diagnosis, an incorrect medication, a delayed intervention.

In this environment, explainability is not optional. It is a regulatory, ethical, and commercial imperative.

What is Explainable AI in Healthcare?

Explainable Artificial Intelligence (XAI) refers to a set of techniques, methodologies, and design principles that make the outputs of AI systems interpretable and understandable to human users, whether those users are clinicians, administrators, or patients themselves.

In healthcare, XAI bridges the gap between an algorithm’s internal logic (which may involve millions of parameters and non-linear relationships) and a plain-language explanation that a patient can meaningfully evaluate and act upon.

XAI does not require that AI systems become simpler. Rather, it provides layered interpretability: the right level of explanation for the right audience at the right moment.

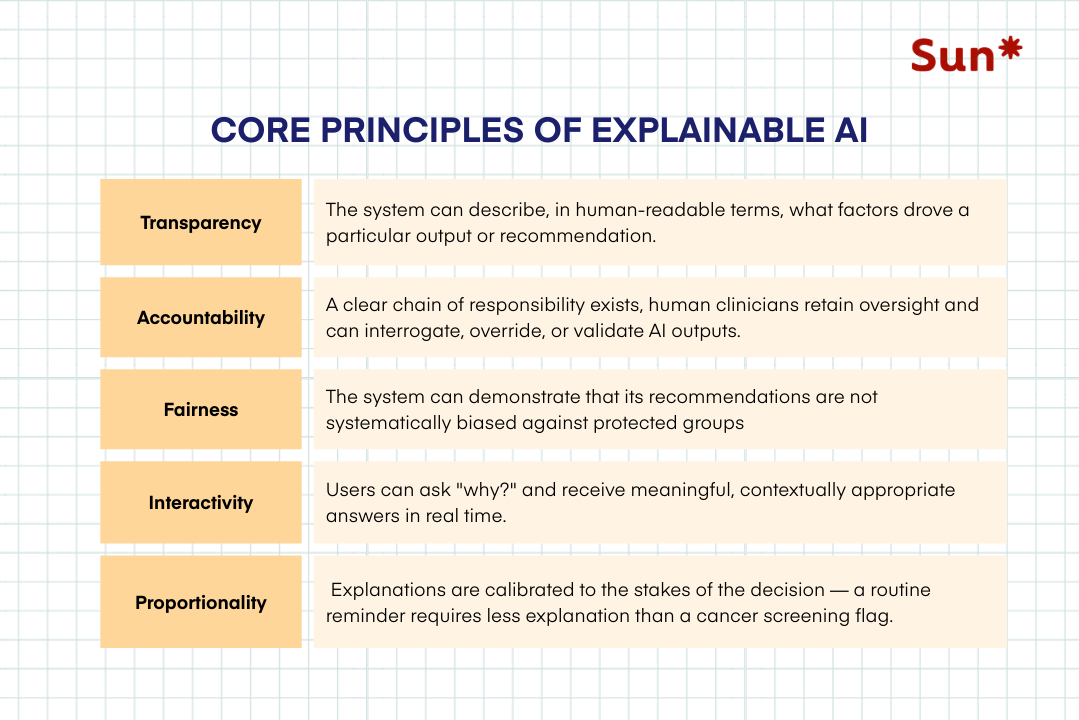

Core principles of Explainable AI in healthcare

To see how XAI transforms a model from a black box into a trusted collaborator for healthcare professionals, we need to understand its core principles. They are as follows:

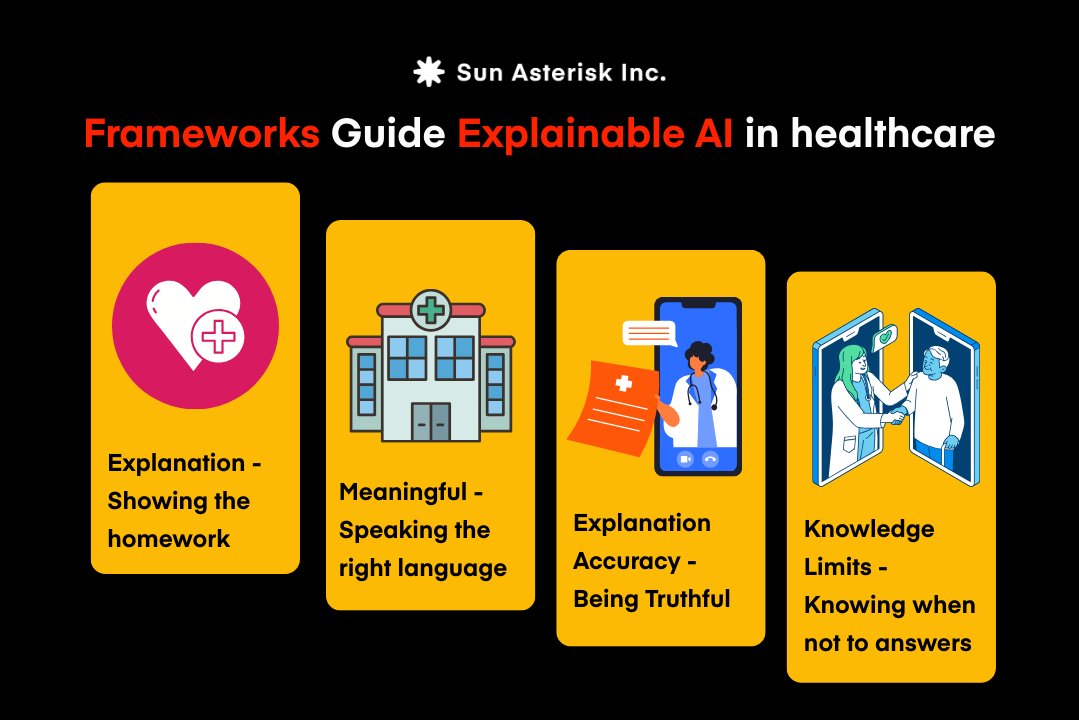

Frameworks that guide Explainable AI in healthcare

For AI to be trusted in healthcare, explainability cannot be an afterthought. It must be built into the design of the system itself. In practice, that means adhering to several foundational principles.

Explanation – Showing the reasoning

An AI system should not simply output a prediction such as “high risk for diabetes.” It should also surface the clinical factors that led to that conclusion, such as elevated insulin levels, longitudinal glucose patterns, or relevant patient history.

Meaningful communication – Speaking the right language

Explanations must be tailored to the audience. A clinician may require detailed clinical variables and statistical confidence, while a patient needs a clear, plain-language explanation. Both, however, must be able to understand why the model produced a particular result.

Explanation fidelity – Ensuring the reasoning is real

The explanation provided by the model must reflect the true drivers of its prediction. If the explanation is merely a simplified guess or a proxy signal, it undermines both transparency and clinical trust.

Knowledge boundaries – Recognizing limits

Equally important is a model’s ability to acknowledge uncertainty. An AI system designed to assess diabetes risk should not attempt to interpret unrelated conditions. Instead, it must be able to signal when a case falls outside its scope or when the available data is insufficient.
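
One way to picture this principle is a scope check that runs before the model scores a patient. The sketch below is only an illustration: the field names, value ranges, and the `check_scope` helper are assumptions made for this example, not part of any specific product or clinical standard.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical inputs for a diabetes-risk model; names and ranges are illustrative only.
REQUIRED_FIELDS = {
    "age": (18, 90),
    "fasting_glucose_mg_dl": (50, 400),
    "bmi": (12, 60),
}


@dataclass
class ScopeCheck:
    in_scope: bool
    reason: Optional[str] = None


def check_scope(patient: dict) -> ScopeCheck:
    """Decide whether the model should score this patient, with a reason if not."""
    for field, (low, high) in REQUIRED_FIELDS.items():
        value = patient.get(field)
        if value is None:
            # Insufficient data: say so instead of guessing.
            return ScopeCheck(False, f"missing required input: {field}")
        if not low <= value <= high:
            # Outside the range the model is assumed to have been validated on.
            return ScopeCheck(False, f"{field}={value} is outside the validated range")
    return ScopeCheck(True)
```

When `check_scope` returns `in_scope=False`, the interface can show the reason and route the case to a clinician rather than display a score the model was never meant to produce.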

These principles form the foundation of explainable AI. The next step is understanding the methods and techniques that make this transparency possible.

5 ways to use Explainable AI in healthcare to increase patient engagement

1. Building trust through bias transparency

Algorithmic bias remains one of the most significant barriers to patient trust in healthcare AI. The risk is not hypothetical. When models are trained predominantly on data from certain demographic groups, their accuracy can decline for underrepresented populations.

Once patients learn an algorithm may be less reliable for people who look like them, trust doesn’t erode gradually; it collapses.

This is where Explainable AI (XAI) becomes more than a technical feature; it becomes a governance mechanism. By making model performance visible, bias can be measured, monitored, and addressed.

Leading healthcare organizations are beginning to operationalize this through bias auditing dashboards that track model performance across demographic groups such as age, gender, and ethnicity. Some are extending this transparency directly to patients through portals that disclose model performance before AI-assisted diagnostics are used.

At the clinical level, automated bias alerts can flag cases where a patient falls into a subgroup with lower model confidence, prompting additional human review.
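
As a rough illustration of what sits behind a bias dashboard or alert, the sketch below computes accuracy per demographic group and flags groups that trail the best-performing one. The function names and the 5-point gap threshold are assumptions for this example; a real audit would likely also track sensitivity, specificity, and calibration per group.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, predicted_label, true_label) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}


def flag_low_performing_groups(records, max_gap=0.05):
    """Return groups whose accuracy trails the best group by more than max_gap."""
    scores = accuracy_by_group(records)
    best = max(scores.values())
    return sorted(group for group, acc in scores.items() if best - acc > max_gap)


# Toy data: group labels and outcomes are made up for illustration.
records = [
    ("A", 1, 1), ("A", 0, 0), ("A", 1, 1), ("A", 0, 0),
    ("B", 1, 0), ("B", 0, 0), ("B", 1, 1), ("B", 0, 1),
]
print(accuracy_by_group(records))
print(flag_low_performing_groups(records))
```

A patient who belongs to a flagged group could then be routed to additional human review, which is the behavior the alert described above is meant to trigger.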

Increasingly, organizations are also adopting annual bias transparency reports, similar to ESG disclosures, to demonstrate accountability and commitment to equitable AI.

2. Transparent treatment recommendation

When an AI system recommends a course of treatment, the patient’s first question is always: “Why?” Traditional black-box AI cannot answer this. XAI can. In healthcare, the ability to answer “why” is the difference between a recommendation that is followed and one that is ignored.

Modern treatment-recommendation systems are beginning to use techniques such as SHAP-based explanations to present patients with clear, ranked factors that influenced a clinical suggestion. Instead of a generic output, patients see the reasoning behind the recommendation.

For example, a diabetes management platform might explain a dosage adjustment by pointing to rising HbA1c levels alongside a recent decline in activity data. Oncology platforms increasingly visualize how genomic markers, imaging findings, and clinical history contribute to a treatment protocol recommendation.

In mental health applications, medication adjustments can be explained through patterns in mood logs, sleep data, and longitudinal PHQ-9 scores.

When patients can see how their own data shaped the recommendation, AI stops feeling like a black box and starts becoming a system they can trust.
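
To make the idea of ranked factors concrete, here is a minimal sketch for the simplest case, a linear score. It does not use the SHAP library. It relies on the fact that, for a linear model with independent features, each feature's SHAP value reduces to its weight times the patient's deviation from the baseline average. The weights, baselines, and feature names are invented for illustration and are not clinical coefficients.

```python
def ranked_contributions(weights, baseline, patient):
    """Per-feature contribution for a linear score: weight * (value - baseline mean)."""
    contributions = {
        name: weights[name] * (patient[name] - baseline[name]) for name in weights
    }
    # Largest absolute contribution first, so the explanation leads with what mattered most.
    return sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)


# Illustrative numbers only.
weights = {"hba1c_pct": 0.8, "daily_steps_thousands": -0.1, "age_years": 0.01}
baseline = {"hba1c_pct": 6.5, "daily_steps_thousands": 6.0, "age_years": 55}
patient = {"hba1c_pct": 8.0, "daily_steps_thousands": 2.0, "age_years": 60}

for name, value in ranked_contributions(weights, baseline, patient):
    direction = "raises" if value > 0 else "lowers"
    print(f"{name}: {direction} the score by {abs(value):.2f}")
```

Non-linear models need an approximation method rather than this closed form, but the patient-facing output has the same shape: a short, ordered list of the factors that moved the recommendation.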

3. Personalized risk dashboard

Generic risk communication rarely changes patient behavior. Telling someone they face “a 15% ten-year risk of cardiovascular disease” often creates anxiety, but little motivation. Without context, risk feels abstract and uncontrollable.

Explainable AI (XAI) is beginning to change how risk is communicated. Instead of presenting a single score, XAI-powered dashboards break risk down into its contributing factors, turning a static prediction into a set of modifiable levers.

In cardiovascular care, for example, a risk dashboard might show that blood pressure accounts for a significant share of a patient’s current risk profile, while illustrating how lowering systolic pressure could meaningfully reduce the overall score.
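
A "what if" view like this can be sketched with a simple logistic risk score that is recomputed under a changed input. The intercept, coefficients, and patient values below are placeholders chosen for readability, not a validated cardiovascular risk model.

```python
import math

# Illustrative coefficients only; not a validated clinical risk model.
INTERCEPT = -9.0
COEFFICIENTS = {"systolic_bp": 0.03, "ldl_mg_dl": 0.01, "age_years": 0.04}


def risk(patient):
    """Logistic risk in [0, 1] from a weighted sum of the patient's inputs."""
    z = INTERCEPT + sum(COEFFICIENTS[key] * patient[key] for key in COEFFICIENTS)
    return 1.0 / (1.0 + math.exp(-z))


def what_if(patient, **changes):
    """Return (current_risk, projected_risk) if the given inputs were changed."""
    return risk(patient), risk({**patient, **changes})


patient = {"systolic_bp": 160, "ldl_mg_dl": 140, "age_years": 60}
current, projected = what_if(patient, systolic_bp=130)
print(f"Current risk: {current:.0%}; with systolic pressure at 130: {projected:.0%}")
```

Showing the two numbers side by side is what turns a static score into a lever the patient can see themselves pulling.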

For chronic disease management, nephropathy risk tools can pair kidney function trends with AI projections under different lifestyle scenarios, helping patients visualize how changes in diet or exercise could alter their trajectory.

Mental health platforms are applying similar approaches by linking relapse risk scores to factors such as sleep disruption, medication adherence, or reported stress levels, often accompanied by practical interventions patients can take immediately.

The shift is subtle but powerful: risk communication moves from passive notification to actionable insight, giving patients a clearer sense of both why the risk exists and how they can influence it.

4. Shared decision-making tools

Shared decision-making (SDM) is widely considered the gold standard for patient-centered care. It is a collaborative process in which clinicians and patients choose a care pathway together, balancing clinical evidence with the patient’s preferences, goals, and tolerance for risk.

Explainable AI (XAI) strengthens this process by ensuring both parties are working from the same transparent evidence. When AI recommendations are interpretable, they become tools for discussion rather than directives to follow.

In practice, XAI-enabled SDM platforms are already emerging across specialties. Orthopedic decision tools, for example, can present patients considering knee replacement with a side-by-side comparison of the factors supporting surgery, such as chronic pain scores, imaging findings, and functional limitations.

They can also highlight indicators favoring continued conservative management, allowing patients and clinicians to weigh both pathways more clearly during the decision-making process.

In oncology, breast cancer SDM tools visualize how genomic test results, tumor staging, and hormone receptor status influence different treatment protocols.

Reproductive health platforms are taking a similar approach, explaining fertility treatment recommendations through individualized biomarker profiles, offering clarity during what is often an emotionally complex decision.

The result is a more informed conversation: AI provides analytical depth, while transparency preserves the patient’s role in the final decision.

5. Explainable proactive alerts and nudges

Preventive care is one of the most effective levers in population health, yet it’s also where patient engagement often fails. Generic reminders like “You are due for your annual mammogram” rarely drive action because they feel impersonal.

Explainable AI (XAI) makes preventive outreach more meaningful by explaining why an alert matters to the individual patient. Instead of a generic notification, patients receive context tied to their own data.

For example, a patient with a family history of colorectal cancer may receive a screening reminder linked to their age, family history, and the timing of their last colonoscopy. A heart failure patient might get an alert triggered by sudden weight gain, with a clear explanation connecting the change to potential fluid retention.

Similarly, hypertensive patients can receive reminders that link missed blood pressure readings to their ongoing medication titration.
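
The heart failure example can be sketched as a rule that returns its own explanation alongside the alert. The threshold, the time window, and the message wording are placeholders for illustration and should not be read as clinical guidance.

```python
def weight_gain_alert(daily_weights_kg, threshold_kg=2.0, window_days=3):
    """Return an explained alert if weight rose by more than threshold_kg within the window.

    threshold_kg and window_days are placeholder values, not clinical guidance.
    """
    if len(daily_weights_kg) < window_days + 1:
        return None  # not enough readings to compare
    gain = daily_weights_kg[-1] - daily_weights_kg[-1 - window_days]
    if gain <= threshold_kg:
        return None
    return {
        "trigger": f"weight up {gain:.1f} kg over {window_days} days",
        "why_it_matters": "rapid weight gain can be a sign of fluid retention",
        "suggested_action": "contact your care team to review your symptoms",
    }


alert = weight_gain_alert([80.0, 80.2, 81.5, 82.6])
if alert:
    print(alert["trigger"], "-", alert["why_it_matters"])
```

Keeping the trigger, the reason, and the next step in one payload means the patient-facing message never has to be a bare "please call us".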

The result is a simple shift: alerts become personalized clinical signals rather than generic reminders, and patients are far more likely to act on them.

How to implement XAI in healthcare for better patient engagement

The five strategies above are most powerful when XAI is embedded systematically into the healthcare organization’s patient engagement infrastructure. Here’s how we recommend putting that into practice:

- Start with a Patient-Centric XAI Audit: Map every AI touchpoint in your patient journey. Prioritize for XAI enhancement based on clinical stakes and patient volume.

- Layer Explanation by Audience: Implement tiered explanation models: plain summaries for patients, feature-importance breakdowns for more engaged patients, full audit trails for clinicians.

- Co-Design with Patients & Clinicians: Conduct user research sessions with patient advisory boards to test explanation formats, language accessibility, and emotional impact.

- Integrate into EHR & Portal Workflows: Embed explanations directly into existing interfaces, surfacing context-sensitive explanations at the moment of patient interaction.

- Establish Continuous Bias Monitoring: Deploy ongoing bias monitoring pipelines that track model performance across demographic subgroups in real time. Publish annual AI transparency reports.

- Train Clinical Teams to Champion XAI: Invest in XAI literacy training. When clinicians can confidently explain AI reasoning, patients trust it too.
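
The "layer explanation by audience" step above can be sketched as one function that renders the same underlying contributions at three levels of detail. The audience labels, the output shapes, and the `explain` helper are assumptions made for this example.

```python
def explain(contributions, audience):
    """Render the same ranked contributions at a level suited to the audience.

    contributions: list of (feature_name, signed_contribution), largest first.
    """
    if audience == "patient":
        # Plain-language summary: only the single most influential factor.
        top_name, _ = contributions[0]
        return f"The biggest factor in this result was your {top_name.replace('_', ' ')}."
    if audience == "engaged_patient":
        # Short feature-importance breakdown: top three factors with direction.
        return [f"{name}: {value:+.2f}" for name, value in contributions[:3]]
    if audience == "clinician":
        # Full detail suitable for an audit trail.
        return {"contributions": dict(contributions), "n_features": len(contributions)}
    raise ValueError(f"unknown audience: {audience}")


# Made-up contributions for illustration.
factors = [("blood_pressure", 0.42), ("cholesterol", 0.20), ("age", 0.05), ("bmi", -0.01)]
print(explain(factors, "patient"))
print(explain(factors, "engaged_patient"))
```

Because every tier is derived from the same contributions, the patient summary and the clinician audit trail cannot drift apart.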

Our team helps healthcare organizations design, audit, and deploy XAI systems that meet HIPAA standards, earn patient trust, and drive measurable engagement outcomes.

👉 Schedule a FREE XAI strategy session.